Matrix

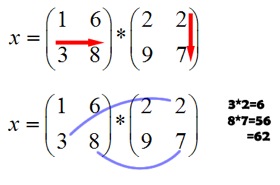

In mathematics, a matrix (plural matrices, or less commonly matrixes) is a rectangular array of numbers. Arranged this way, matrices can record data that depend on several parameters. In particular, they are used to keep track of the coefficients of systems of linear equations. Matrices are closely connected to linear transformations, which are higher-dimensional analogs of linear functions, i.e., functions of the form f(x) = c · x, where c is a constant. Such a function corresponds to a matrix with one row and one column, whose single entry is c. In addition to a number of elementary, entrywise operations such as matrix addition, a key notion is matrix multiplication, which displays features not encountered with numbers. For example, the product of two matrices depends on the order of the factors. This is unlike the product of real numbers, which is commutative: c · d = d · c for any two numbers c and d.
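The dependence of matrix products on the order of the factors can be checked on a small 2-by-2 example. The sketch below stores a matrix as a plain Python list of rows. The helper name `matmul` and the sample matrices are illustrative choices, not taken from any library.

```python
def matmul(a, b):
    """Return the product AB of two matrices stored as lists of rows."""
    if len(a[0]) != len(b):
        raise ValueError("A must have as many columns as B has rows")
    # Entry (i, j) is the dot product of row i of A with column j of B.
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]

A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]

print(matmul(A, B))  # [[2, 1], [4, 3]]
print(matmul(B, A))  # [[3, 4], [1, 2]]

# A 1-by-1 matrix [[c]] acts like the linear function f(x) = c * x.
print(matmul([[5]], [[3]]))  # [[15]]
```

Multiplying by B from the right swaps the columns of A. Multiplying from the left swaps its rows. The two products therefore differ.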

In the particular case of square matrices, that is, matrices with an equal number of rows and columns, more refined data are attached to a matrix. These include the determinant and the inverse matrix, which both govern the solution properties of the system of linear equations associated with the matrix, as well as eigenvalues and eigenvectors.
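For the 2-by-2 case, the link between the determinant, the inverse, and solvability can be written out explicitly. The determinant of [[a, b], [c, d]] is ad − bc. When it is non-zero, the inverse is (1/(ad − bc)) · [[d, −b], [−c, a]], and the system Ax = y has exactly one solution. The following is a minimal sketch limited to 2-by-2 matrices. The helper names are hypothetical, and it assumes integer (or `Fraction`) entries so that `Fraction` can keep the arithmetic exact.

```python
from fractions import Fraction

def det2(m):
    """Determinant of the 2-by-2 matrix [[a, b], [c, d]], i.e. ad - bc."""
    (a, b), (c, d) = m
    return a * d - b * c

def inverse2(m):
    """Inverse of a 2-by-2 matrix, or None if its determinant is zero."""
    (a, b), (c, d) = m
    det = det2(m)
    if det == 0:
        return None  # singular: no inverse, and no unique solution of m x = y
    f = Fraction(1, det)  # exact rational arithmetic; assumes integer entries
    return [[d * f, -b * f], [-c * f, a * f]]

def solve2(m, y):
    """Solve m x = y by multiplying y with the inverse; None if m is singular."""
    inv = inverse2(m)
    if inv is None:
        return None
    return [inv[0][0] * y[0] + inv[0][1] * y[1],
            inv[1][0] * y[0] + inv[1][1] * y[1]]

M = [[2, 1],
     [1, 3]]
print(det2(M))                     # 5
print(solve2(M, [3, 5]))           # [Fraction(4, 5), Fraction(7, 5)]
print(inverse2([[1, 2], [2, 4]]))  # None
```

For larger matrices, an explicit inverse is rarely computed in practice. Elimination or decomposition methods are used instead.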

Applications

Matrices find many applications. Physics makes use of them in various domains, for example in geometrical optics and matrix mechanics. The latter also led to a more detailed study of matrices with an infinite number of rows and columns. Graph theory uses matrices that encode the distances between the nodes of a graph, such as cities connected by roads. Computer graphics uses matrices to encode projections of three-dimensional space onto a two-dimensional screen. Matrix calculus generalizes classical analytical notions such as derivatives and exponentials to matrices. The matrix exponential in particular is a recurring need in solving ordinary differential equations.
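As an illustration of how a matrix can encode a projection, the sketch below uses the simplest case: an orthographic projection onto the (x, y) plane, given by a 2-by-3 matrix that keeps x and y and discards the depth coordinate z. This matrix is an assumption chosen for clarity. Real graphics pipelines typically use 4-by-4 matrices in homogeneous coordinates, which can also express perspective.

```python
def apply(matrix, vector):
    """Multiply a matrix (list of rows) by a column vector (sequence)."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, vector)) for row in matrix]

# Hypothetical orthographic projection: (x, y, z) -> (x, y).
ORTHO = [[1, 0, 0],
         [0, 1, 0]]

corners = [(1, 2, 5), (1, 2, -3), (-4, 0, 7)]
print([apply(ORTHO, p) for p in corners])  # [[1, 2], [1, 2], [-4, 0]]
```

The first two points differ only in depth, so they land on the same screen position.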

Due to their widespread use, considerable effort has been made to develop efficient methods of matrix computation, particularly for large matrices. To this end, there are several matrix decomposition methods. These express a matrix as a product of other matrices with particular properties that simplify computations, both theoretically and practically. Sparse matrices, that is, matrices with few non-zero entries, often allow for more specifically tailored algorithms for these tasks. Such matrices occur, for example, when simulating mechanical experiments with the finite element method.
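One simple way to exploit sparsity is to store only the non-zero entries, keyed by their (row, column) position. This is often called "dictionary of keys" storage. The class below is a minimal, hypothetical sketch of that idea, not a library API. Production code would normally use an established package such as SciPy's `scipy.sparse`. The example matrix is tridiagonal, with 2 on the diagonal and −1 beside it, a pattern that arises, for example, when discretizing one-dimensional problems.

```python
class SparseMatrix:
    """Dictionary-of-keys storage: only non-zero entries are kept."""

    def __init__(self, rows, cols):
        self.shape = (rows, cols)
        self.entries = {}  # maps (row, column) -> non-zero value

    def __setitem__(self, key, value):
        if value == 0:
            self.entries.pop(key, None)  # never store explicit zeros
        else:
            self.entries[key] = value

    def __getitem__(self, key):
        return self.entries.get(key, 0)  # absent entries are zero

    def matvec(self, x):
        """Compute the product A x, visiting only the stored entries."""
        result = [0] * self.shape[0]
        for (i, j), a_ij in self.entries.items():
            result[i] += a_ij * x[j]
        return result


# Tridiagonal matrix with 2 on the diagonal and -1 beside it.
n = 5
A = SparseMatrix(n, n)
for i in range(n):
    A[i, i] = 2
    if i > 0:
        A[i, i - 1] = -1
    if i < n - 1:
        A[i, i + 1] = -1

print(len(A.entries), "of", n * n, "entries stored")  # 13 of 25 entries stored
print(A.matvec([1, 1, 1, 1, 1]))                       # [1, 0, 0, 0, 1]
```

For this pattern the number of stored entries is 3n − 2 rather than n². The matrix-vector product's cost likewise grows linearly in n.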

Matrices are studied in the field of matrix theory. The close relationship between matrices and linear transformations makes matrices a key notion of linear algebra. Other types of entries, such as elements of more general mathematical fields or even rings, are also used. Matrices consisting of only one column or row are called vectors, while higher-dimensional (e.g., three-dimensional) arrays of numbers are called tensors.

Definition

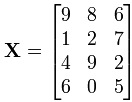

A matrix is a rectangular arrangement of numbers. For example,

alternatively denoted using parentheses instead of box brackets:

The horizontal and vertical lines in a matrix are called rows and columns, respectively. The numbers in the matrix are called its entries. To specify a matrix's size, a matrix with m rows and n columns is called an m-by-n matrix or m×n matrix, while m and n are called its dimensions. The above is a 4-by-3 matrix.

A matrix where one of the dimensions equals one is also called a vector, and may be interpreted as an element of real coordinate space. An m×1 matrix (one column and m rows) is called a column vector and a 1×n matrix (one row and n columns) is called a row vector. For example, the second row vector of the above matrix is
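These size conventions can be mirrored in code by storing a matrix as a list of rows. The 4-by-3 matrix below has arbitrary values chosen for illustration. The helper names are hypothetical. Python indexes from 0, so the "second row" has index 1.

```python
# An arbitrary 4-by-3 matrix, stored as a list of rows (illustrative values).
A = [[1, 2, 3],
     [4, 5, 6],
     [7, 8, 9],
     [10, 11, 12]]

m, n = len(A), len(A[0])     # m rows, n columns
print(f"{m}-by-{n} matrix")  # 4-by-3 matrix

def row_vector(matrix, i):
    """Row i (0-based) as a 1-by-n matrix."""
    return [list(matrix[i])]

def column_vector(matrix, j):
    """Column j (0-based) as an m-by-1 matrix."""
    return [[row[j]] for row in matrix]

print(row_vector(A, 1))     # [[4, 5, 6]]  (the second row)
print(column_vector(A, 2))  # [[3], [6], [9], [12]]  (the third column)
```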

History

Matrices have a long history of application in solving linear equations. The Chinese mathematical text The Nine Chapters on the Mathematical Art (Jiu Zhang Suan Shu), written between 300 BC and AD 200, is the first example of the use of matrix methods to solve simultaneous equations. It includes the concept of determinants, almost 2000 years before that concept was published by the Japanese mathematician Seki in 1683 and the German mathematician Leibniz in 1693. Later, Gabriel Cramer developed the theory further in the 18th century, presenting Cramer's rule in 1750. Gauss and Jordan developed Gauss-Jordan elimination in the 1800s.

The term "matrix" was coined in 1848 by J. J. Sylvester, who, however, understood it only as an object giving rise to a number of determinants, today called minors. Arthur Cayley, in his 1858 Memoir on the theory of matrices, first used the term matrix in the modern sense, but proved little beyond what is today known as the Cayley-Hamilton theorem. At that time, determinants played a more prominent role than matrices. The study of determinants sprang from several sources. Number-theoretical problems led Gauss to relate coefficients of quadratic forms and linear maps in three dimensions to matrices. Ferdinand Eisenstein further developed these notions, including the remark that, in modern parlance, matrix products are non-commutative. Augustin Cauchy was the first to prove general statements about determinants. As the definition of the determinant of a matrix $A = [a_{i,j}]$, he used the following: replace the powers $a_j^k$ by $a_{jk}$ in the polynomial

$$a_1 a_2 \cdots a_n \prod_{i < j} (a_j - a_i),$$

where $\prod$ denotes the product of the indicated terms.

He also showed, in 1829, that the eigenvalues of symmetric matrices are real. Carl Gustav Jacob Jacobi studied "functional determinants", later baptised Jacobi determinants by Sylvester. Leopold Kronecker's Vorlesungen über die Theorie der Determinanten and Karl Weierstrass' Zur Determinantentheorie, both published in 1903, first gave an axiomatic treatment of determinants. At that point, determinants were firmly established.

Further workers on matrix theory include William Rowan Hamilton, Hermann Grassmann, Ferdinand Georg Frobenius and John von Neumann.

Basic operations

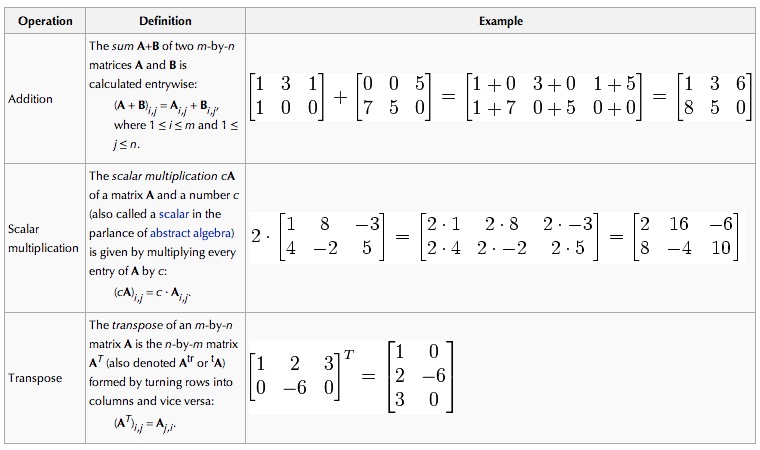

There are several operations that can be applied to modify matrices: matrix addition, scalar multiplication, and transposition. These form the basic techniques for working with matrices.

Familiar properties of numbers extend to these operations on matrices. For example, addition is commutative, i.e., the matrix sum does not depend on the order of the summands: $A + B = B + A$. The transpose is compatible with addition and scalar multiplication, as expressed by $(cA)^{\mathrm T} = c(A^{\mathrm T})$ and $(A + B)^{\mathrm T} = A^{\mathrm T} + B^{\mathrm T}$. Finally, $(A^{\mathrm T})^{\mathrm T} = A$.[1]
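Matrix addition and scalar multiplication act entry by entry, and transposition moves entry (i, j) to position (j, i). The plain-Python sketch below implements the three operations with hypothetical helper names. It then checks the commutativity and transpose identities on one example. Such a check illustrates the identities but of course does not prove them.

```python
def add(a, b):
    """Entrywise sum of two matrices of the same size."""
    if len(a) != len(b) or len(a[0]) != len(b[0]):
        raise ValueError("matrices must have the same dimensions")
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def scale(c, a):
    """Multiply every entry of a by the scalar c."""
    return [[c * x for x in row] for row in a]

def transpose(a):
    """Swap rows and columns: entry (i, j) moves to (j, i)."""
    return [list(col) for col in zip(*a)]

A = [[1, 3, 1],
     [1, 0, 0]]
B = [[0, 0, 5],
     [7, 5, 0]]
c = 2

print(add(A, B))     # [[1, 3, 6], [8, 5, 0]]
print(transpose(A))  # [[1, 1], [3, 0], [1, 0]]

# The identities hold for this example:
assert add(A, B) == add(B, A)                                   # A + B = B + A
assert transpose(scale(c, A)) == scale(c, transpose(A))         # (cA)^T = c(A^T)
assert transpose(add(A, B)) == add(transpose(A), transpose(B))  # (A + B)^T = A^T + B^T
assert transpose(transpose(A)) == A                             # (A^T)^T = A
```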
